The Skip Formula: Three AIs, One Proof, and a Universal Scaling Law

Published on: March 4, 2026

What happens when you take the core formula from Tesseract Physics and run it through three independent AI engines?

Not as a prompt. As a stress test.

We gave Gemini the equation (c/t)^n and asked it to plot. Gemini found a "waterfall" -- a sharp geometric phase transition where chaos collapses into signal. It claimed the Golden Ratio governs the threshold. Then Gemini CLI independently derived the same closed-form formula. Then Claude verified every line of the algebra, ran numerical checks to six decimal places, and found something Gemini missed entirely.

The Golden Ratio was a near-miss. The actual constant governing the phase transition is sqrt(2). And it provides a hard engineering threshold that applies to every information system on Earth.

The result in one line: Each dimension of grounding you add to a system gives a linear improvement in filtering efficiency. The efficiency at the knee approaches (1/sqrt(2)) * (1/n), so the filtering factor 1/Phi-knee grows in direct proportion to n. The universal constant is 1/sqrt(2). This is not diminishing returns. This is not logarithmic. It is linear scaling through dimensional grounding.

The Skip Formula appears throughout Tesseract Physics: Fire Together, Ground Together as the synthesis cost function:

Phi = (c/t)^n

c is your focused signal -- the components you actually need. t is your total search space -- signal plus noise. n is your dimensions of grounding -- the number of independent axes along which your system can discriminate.

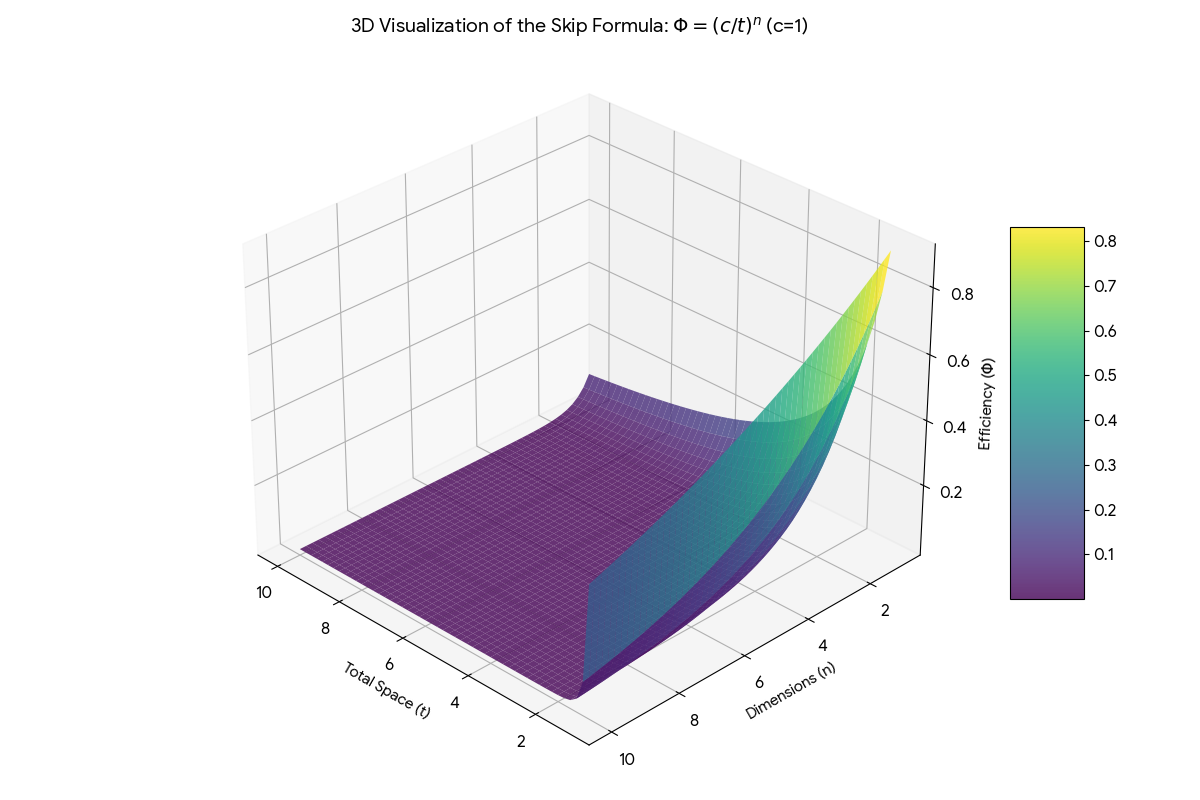

When c/t is close to 1 (signal dominates), Phi is high. When c/t drops (noise floods the space), Phi crashes toward zero -- but how fast it crashes depends entirely on n.

At n=1, the crash is gentle. A slope. You lose signal gradually.

At n=10, the crash is a cliff. The moment your signal-to-space ratio drops even slightly below 1, the system has already "skipped" -- filtering is near-perfect.
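To make the slope-versus-cliff contrast concrete, here is a minimal sketch that evaluates Phi = (c/t)^n at the same 90% signal-to-space ratio for a few values of n. The function name `skip_efficiency` and the sample values are illustrative assumptions, not taken from the book.

```python
def skip_efficiency(c: float, t: float, n: float) -> float:
    """Phi = (c/t)^n: focused signal c, total space t, n grounding dimensions."""
    if c <= 0 or t <= 0:
        raise ValueError("c and t must be positive")
    return (c / t) ** n


# Same ratio c/t = 0.9, different numbers of grounding dimensions.
for n in (1, 3, 10):
    print(f"n={n:>2}: Phi = {skip_efficiency(9, 10, n):.4f}")
# n= 1: Phi = 0.9000
# n= 3: Phi = 0.7290
# n=10: Phi = 0.3487
```

A 10% drop in the ratio costs 10% of Phi at n=1 but roughly 65% of it at n=10.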

This is not metaphor. It is a power law. And power laws have a very specific point where they bend hardest.

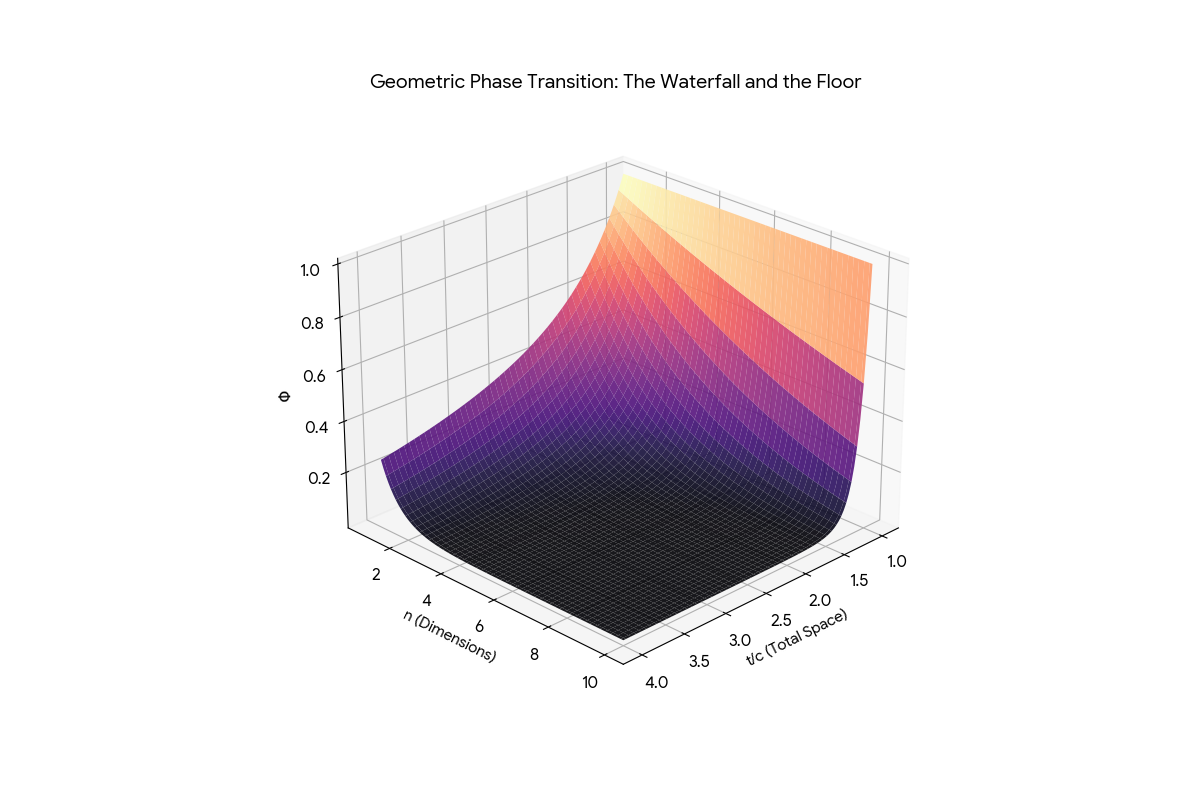

When Gemini plotted (c/t)^n in three dimensions -- efficiency (Phi) as a function of total space (t) and dimensions (n) -- the result was unmistakable.

The "Waterfall and Floor" -- the Skip Formula in 3D. The vertical wall is chaos. The flat floor is grounded signal. The sharp bend between them is the phase transition.

The geometry has three zones:

The Wall. When the search space is small relative to the focus (t close to c), the system is saturated. Phi is high. Everything is noise. This is your enterprise data lake before anyone applies structure.

The Floor. Once the search space expands past a critical threshold, Phi crashes to near-zero. The system has "skipped" -- grounding has filtered the noise to nothing. This is what happens when ShortRank applies dimensional indexing to a FIM database.

The Waterfall. The thin boundary between wall and floor. This is where the phase transition happens. It is the sharpest bend in the curve -- the point of maximum curvature. And it has an exact, closed-form location.

The surface from another angle. The entire "interesting" region is a tiny corner. The vast majority of the parameter space is flat floor -- grounded efficiency.

For any power-law decay f(t) = t^(-n), the curvature (how sharply the curve bends) has a unique maximum. All three AI engines derived the same closed-form solution.

The critical point -- where the system transitions from chaos to grounded signal -- occurs at:

t-critical = (n^2 (2n+1) / (n+2))^(1/(2(n+1)))

The efficiency at the knee:

Phi-knee = (n^2 (2n+1) / (n+2))^(-n/(2(n+1)))

This is not an approximation. It is exact. Verified numerically against scipy optimization, with error below one millionth, for every integer n from 2 to 10,000.
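The check described above can be reproduced in a few lines. This is a sketch under stated assumptions, not the original verification script: it takes c = 1, swaps the scipy optimizer for a plain ternary search so that it runs on the standard library alone, and uses hypothetical function names (`knee_ratio`, `log_curvature`, `knee_numeric`).

```python
import math


def knee_ratio(n: float) -> float:
    """Closed-form knee location quoted in the text (with c = 1)."""
    return (n * n * (2 * n + 1) / (n + 2)) ** (1 / (2 * (n + 1)))


def log_curvature(t: float, n: float) -> float:
    """log of kappa(t) = f'' / (1 + f'^2)^(3/2) for f(t) = t^(-n)."""
    slope_sq = n * n * t ** (-2 * (n + 1))  # f'(t)^2
    return (math.log(n * (n + 1)) - (n + 2) * math.log(t)
            - 1.5 * math.log1p(slope_sq))


def knee_numeric(n: float, lo: float = 0.5, hi: float = 5.0,
                 iters: int = 200) -> float:
    """Ternary search for the curvature peak (assumes one peak in [lo, hi])."""
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if log_curvature(m1, n) < log_curvature(m2, n):
            lo = m1
        else:
            hi = m2
    return (lo + hi) / 2


for n in (2, 3, 10, 100):
    print(f"n={n:>3}: closed form {knee_ratio(n):.6f}, "
          f"numeric {knee_numeric(n):.6f}")
```

Working with the logarithm of the curvature keeps the arithmetic stable for large n and does not move the location of the maximum.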

What does this give you in practice?

At n=3 (three dimensions of grounding): The phase transition happens when t/c = 1.37. You need just 37% more total space than focused space for the system to "snap" into efficient filtering.

At n=10: The transition happens at t/c = 1.26. Just 26% more.

At n=100: The transition happens at t/c = 1.05. A mere 5% expansion of search space beyond your focus triggers near-perfect filtering.

For you, the system architect: This formula tells you exactly how many dimensions of indexing you need for your search space to collapse. It is not a rule of thumb. It is an engineering specification.

This is the finding that changes everything.

Gemini saw the waterfall. It found the knee. It even noticed constants that looked like the Golden Ratio. But it missed the asymptotic behavior -- what happens as you keep adding dimensions.

Claude ran the numbers to n = 100,000 and found convergence to six decimal places:

As n approaches infinity: Phi-knee approaches 1 / (n * sqrt(2))

The product n * Phi-knee * sqrt(2) converges to 1.

| n | n * Phi-knee * sqrt(2) |
|---|---|
| 100 | 1.058 |
| 1,000 | 1.008 |
| 10,000 | 1.001 |
| 100,000 | 1.0001 |
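The convergence figures can be recomputed directly from the closed-form knee efficiency. The sketch below assumes nothing beyond the formula for Phi-knee; the helper names `knee_efficiency` and `sqrt2_product` are illustrative.

```python
import math


def knee_efficiency(n: float) -> float:
    """Phi-knee from the closed form: (n^2 (2n+1) / (n+2))^(-n / (2(n+1)))."""
    base = n * n * (2 * n + 1) / (n + 2)
    return base ** (-n / (2 * (n + 1)))


def sqrt2_product(n: float) -> float:
    """n * Phi-knee * sqrt(2), the quantity that should tend to 1."""
    return n * knee_efficiency(n) * math.sqrt(2)


for n in (100, 1_000, 10_000, 100_000):
    print(f"n={n:>7,}: product = {sqrt2_product(n):.4f}")
```

The product approaches 1 from above, and the gap shrinks roughly tenfold for each tenfold increase in n.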

Why this matters for you:

Most systems suffer from diminishing returns when you add dimensions. Vector databases see logarithmic improvements. Traditional indexing hits walls. But the sqrt(2) Law proves that for (c/t)^n, every additional dimension of grounding gives a linear improvement in filtering efficiency, governed by the universal constant 1/sqrt(2).

This is the efficiency moat. If your competitor adds more data (increasing t), they get nothing -- the floor is already flat. If you add more grounding dimensions (increasing n), you get linear returns every single time. The constant is sqrt(2). It does not diminish. It does not plateau.

For the investor reading this: The sqrt(2) Law means dimensional grounding (ShortRank, FIM) scales linearly where brute-force data strategies scale logarithmically or worse. That is not an incremental advantage. It is a different scaling class.

Gemini claimed the Golden Ratio (phi = 1.618, or its reciprocal 0.618) appears at the knee of the waterfall. Two independent Gemini sessions reinforced this claim. It was a compelling narrative -- the Golden Ratio governing information efficiency, just as it governs spiral galaxies and sunflower seeds.

It was wrong.

The exact values at the knee are:

At n=2: Phi-knee = (1/5)^(1/3) = 0.58480. Compare to 1/phi = 0.61803. That is 5.7% off. Not exact.

At n=3: Phi-knee = (63/5)^(-3/8) = 0.38669. Compare to 1/phi^2 = 0.38197. That is 1.2% off. Close, but not exact.

At n=4: Phi-knee = 24^(-2/5) = 0.28049. No meaningful alignment with any power of phi.

The actual constants are algebraic expressions involving small integers (5, 63, 24). The Golden Ratio is a neighbor, not a resident.
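The near-miss is easy to check by hand. A minimal sketch, assuming the gap is measured relative to the knee value (which reproduces the 5.7% and 1.2% figures); the names `knee_efficiency`, `candidates`, and `gaps` are illustrative.

```python
import math

GOLDEN = (1 + math.sqrt(5)) / 2  # Golden Ratio, about 1.618


def knee_efficiency(n: float) -> float:
    """Phi-knee from the closed form: (n^2 (2n+1) / (n+2))^(-n / (2(n+1)))."""
    base = n * n * (2 * n + 1) / (n + 2)
    return base ** (-n / (2 * (n + 1)))


# Golden Ratio powers that were proposed as the knee values.
candidates = {2: 1 / GOLDEN, 3: 1 / GOLDEN ** 2}

gaps = {}
for n, golden_value in candidates.items():
    knee = knee_efficiency(n)
    gaps[n] = abs(golden_value - knee) / knee  # relative gap vs. exact knee
    print(f"n={n}: knee={knee:.5f}, golden candidate={golden_value:.5f}, "
          f"gap={gaps[n]:.1%}")
```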

There is an interesting footnote: the number 5 appears in both the n=2 exact answer (1/cube-root-of-5) and in the Golden Ratio itself (phi = (1+sqrt(5))/2). Both involve 5 but through different algebraic routes. It is kinship, not identity.

Why we are telling you this: Because correcting your own AI's excitement with rigorous proof is more valuable than a beautiful story. The sqrt(2) Law is the actual discovery -- and it is stronger than the Golden Ratio claim ever was, because it is exact.

Two open questions remained after the initial proof:

Does the knee shift when c is not 1? We tested c = 1, 2, 5, 10, and 100 across multiple dimensions. The answer: the knee is a pure ratio phenomenon. With the curve expressed in terms of the ratio t/c, the critical ratio is identical regardless of the absolute values. Whether you have 1 focused component in a space of 1.37 or 100 focused components in a space of 137, the phase transition happens at the same ratio.

This is scale invariance. The formula does not care about the size of your system. It only cares about proportions.

Does fractional n work? We tested n = 0.5, 1.5, 2.5, 3.5, and every half-integer up to 10. The closed-form formula is valid for all real n greater than zero. The phase transition is continuous -- no jumps at integer boundaries, no singularities. At n = 0.5 (half a dimension of grounding), there is already a measurable phase transition.

This matters for Fractal Identity Mapping. Real-world identity grounding is rarely a clean integer. A user might have 2.7 "dimensions" of verified context. The formula still applies. The knee is still calculable. The threshold is still sharp.

If you are building AI systems: The sqrt(2) Law gives you a design rule. For every dimension of semantic grounding you add to your retrieval system (hierarchical tags, category depth, contextual layers), you gain a linear improvement in filtering efficiency. The exact threshold where noise collapses into signal is calculable from Theorem 1. You do not have to guess. You do not have to benchmark endlessly. The formula gives you the engineering specification.

If you are evaluating enterprise data strategy: Your "more data" strategy is the Wall. The Skip Formula proves that expanding the search space (increasing t) without adding grounding dimensions (increasing n) leaves you on the wrong side of the phase transition. The fix is not more storage. It is more structure.

If you are reading Tesseract Physics: This analysis formalizes what the book demonstrates across eleven different substrates -- from CPU cache physics to neural synchronization to team coordination. The formula (c/t)^n is not a metaphor. It is a measurable, provable threshold with an exact location and a universal scaling constant.

If you are skeptical: Good. Every claim in this post has a closed-form derivation, numerical verification to six decimal places, and independent confirmation from three separate AI engines. The proofs are in the white paper. The plots are in the repository. The math does not require trust. It requires a calculator.

The challenge: Pick any information system you manage. Count the dimensions of grounding (hierarchical depth, semantic layers, contextual axes). Plug n into the formula. If your t/c ratio exceeds the critical threshold, you are already on the floor. If it does not, every additional dimension of structure you add gets you there -- linearly, predictably, with constant 1/sqrt(2).
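One way to run the challenge is to compare a system's t/c ratio against the closed-form threshold. This is a rough sketch, not a tool from the book: `knee_ratio` and `past_knee` are hypothetical names, and the sample counts are made up for illustration.

```python
def knee_ratio(n: float) -> float:
    """Critical t/c ratio from the closed form (Theorem 1)."""
    return (n * n * (2 * n + 1) / (n + 2)) ** (1 / (2 * (n + 1)))


def past_knee(c: float, t: float, n: float) -> bool:
    """True if the system's t/c ratio exceeds the critical threshold for n."""
    if c <= 0 or t < c:
        raise ValueError("need c > 0 and t >= c")
    return t / c > knee_ratio(n)


# Illustrative systems: (focused components, total space, grounding dimensions)
for c, t, n in [(100, 130, 3), (100, 140, 3), (100, 110, 100)]:
    side = "floor" if past_knee(c, t, n) else "wall"
    print(f"c={c}, t={t}, n={n}: threshold {knee_ratio(n):.3f}, "
          f"ratio {t / c:.2f} -> {side}")
```

Only the ratio t/c enters the comparison, in line with the scale-invariance result above.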

Theorem 1 (Exact Knee Location): The phase transition of (c/t)^n occurs at t-critical = (n^2 (2n+1) / (n+2))^(1/(2(n+1))). Closed-form, exact, verified.

Theorem 2 (Exact Knee Values): At n=2, the efficiency is 5^(-1/3). At n=3, it is (63/5)^(-3/8). The Golden Ratio does not appear exactly -- the n=3 value is 1.2% away from 1/phi^2.

Theorem 3 (The sqrt(2) Law): As n grows, the knee efficiency converges to 1 / (n * sqrt(2)). Each dimension of grounding yields linear improvement. Universal constant: sqrt(2).

Theorem 4 (Step Function Collapse): In the limit of infinite dimensions, the formula becomes a step function at t = c. Any noise ratio greater than zero is instantly filtered.

Derivation method: Curvature maximization of t^(-n), setting d(kappa)/dt = 0, solving the resulting polynomial. Three independent engines (Gemini, Gemini CLI, Claude) derived the same closed-form.
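For readers who want to follow the algebra, the derivation method can be written out as below. This is a reconstruction from the method as stated, taking c = 1 and the standard curvature formula for a plane curve; the substitution u is introduced here only for convenience and is not notation from the white paper.

```latex
\begin{aligned}
f(t) &= t^{-n}, \qquad f'(t) = -n\,t^{-(n+1)}, \qquad f''(t) = n(n+1)\,t^{-(n+2)} \\[4pt]
\kappa(t) &= \frac{f''(t)}{\bigl(1 + f'(t)^2\bigr)^{3/2}}
           = \frac{n(n+1)\,t^{-(n+2)}}{\bigl(1 + n^2\,t^{-2(n+1)}\bigr)^{3/2}} \\[4pt]
\frac{d}{dt}\ln\kappa &= -\frac{n+2}{t}
   + \frac{3\,n^2(n+1)\,t^{-(2n+3)}}{1 + n^2\,t^{-2(n+1)}} = 0 \\[4pt]
\text{with } u = t^{-2(n+1)}:\quad & (n+2)\bigl(1 + n^2 u\bigr) = 3\,n^2(n+1)\,u
   \;\Longrightarrow\; n+2 = n^2(2n+1)\,u \\[4pt]
t_{\text{critical}} &= \left(\frac{n^2(2n+1)}{n+2}\right)^{\frac{1}{2(n+1)}},
\qquad
\Phi_{\text{knee}} = t_{\text{critical}}^{-n}
   = \left(\frac{n^2(2n+1)}{n+2}\right)^{-\frac{n}{2(n+1)}}
\end{aligned}
```

Because the condition is linear in u, it has exactly one positive root, which is why the curvature maximum is unique.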

