The Zone Boundary: When a Waterfall Settled the Superintelligence Debate

Published on: March 4, 2026

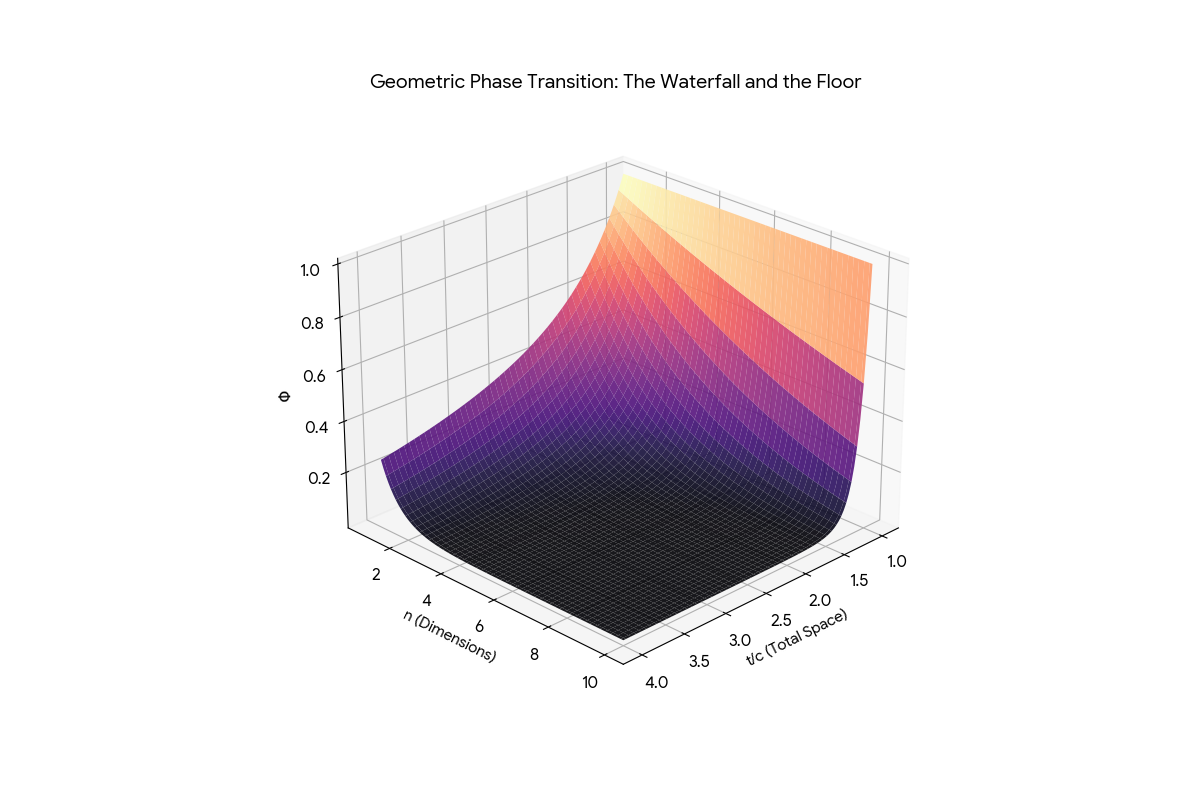

Three AI engines plotted (c/t)^n in three dimensions. All three found the same thing: a vertical wall of chaos, a flat floor of grounded signal, and a razor-sharp boundary between them.

The Waterfall and Floor. Chaos on the left. Grounded signal on the right. The boundary between them is exact, closed-form, and measurable.

We published the proof. We published the steelman of Newport. We published the DSSM tally. What we did not do -- until now -- is connect them.

The waterfall is not an illustration. It is the answer to the question both Newport and Yudkowsky are circling: Where does intelligence stop working?

The honest answer: it depends on what "this" refers to -- which claim about AI danger you are testing.

If "this" is the specific claim that a superintelligent AI will convert the biosphere into compute -- the waterfall has a precise mathematical answer. Any optimizer that destroys its grounding dimensions to maximize processing power crosses the zone boundary in the wrong direction. It moves from the Floor (coherent signal) to the Wall (saturated noise). The (c/t)^n penalty is not a metaphor. It is a power law. At n=10, a 5% drop in grounding ratio destroys 40% of filtering efficiency. The Computronium Paradox is not a thought experiment. It is Theorem 1 applied to substrate.
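Here is a minimal Python sketch of the arithmetic behind the n=10 figure. It assumes that c/t is a grounding ratio between 0 and 1 and that filtering efficiency scales as (c/t)^n. The starting ratio cancels out, so a relative drop d in c/t leaves a fraction (1 - d)^n of the original efficiency. The function names are illustrative and do not come from any published Skip Formula code.

```python
def signal_survival(grounding_ratio: float, n: int) -> float:
    """Assumed filtering efficiency: (c/t)^n with c/t in [0, 1]."""
    if not 0.0 <= grounding_ratio <= 1.0:
        raise ValueError("grounding ratio c/t is assumed to lie in [0, 1]")
    return grounding_ratio ** n


def relative_loss(drop: float, n: int) -> float:
    """Share of efficiency lost when c/t falls by the relative amount `drop`."""
    # ((c/t) * (1 - drop))^n / (c/t)^n == (1 - drop)^n, so the starting ratio cancels.
    return 1.0 - (1.0 - drop) ** n


if __name__ == "__main__":
    for n in (1, 10):
        print(f"n={n:2d}: a 5% drop in c/t costs {relative_loss(0.05, n):.1%} of efficiency")
    # Cross-check relative_loss against the direct ratio for one starting point.
    before, after = signal_survival(0.9, 10), signal_survival(0.9 * 0.95, 10)
    print(f"direct check at c/t = 0.9: {1 - after / before:.1%}")
```

At n=1 the same 5% drop costs only 5%. At n=10 it costs about 40%, because 0.95^10 is roughly 0.599.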

If "this" is the broader question -- are current AI systems dangerous? -- the waterfall answers differently. Not because they are too smart. Because they are in the wrong zone. They are operating on the Wall side of the phase transition, generating confident text from saturated noise, and the systems downstream treat that text as signal from the Floor.

A critical nuance: (c/t)^n calculates the signal that survives. Its complement -- 1 minus (c/t)^n -- calculates the entropy that accumulates. Every ungrounded synthesis hop injects approximately k_E = 0.003 of noise into the system. In a linear system, that is negligible. In a recursive system -- an AI agent looping through chain-of-thought, a RAG pipeline chaining retrievals, a superintelligence planning at planetary scale -- the noise compounds geometrically. By hop 100, the accumulated entropy is about 26%. By hop 500, it is nearly 78%. The system does not know it has collapsed. It keeps generating text. But the structural coherence of its output crossed below the phase transition hundreds of hops ago. It is generating from the Wall. Every token is noise shaped into grammar.
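The entropy figures follow if each hop independently retains a fraction 1 - k_E of coherence. Under that model, the accumulated entropy after h hops is 1 - (1 - k_E)^h. The sketch below is a hypothetical illustration of that assumption, with k_E = 0.003 taken from the text. It gives about 26% at hop 100 and about 77.7% at hop 500.

```python
K_E = 0.003  # per-hop noise figure quoted in the text


def accumulated_entropy(hops: int, k_e: float = K_E) -> float:
    """1 - (1 - k_e)^hops: share of coherence lost after `hops` ungrounded hops."""
    # Assumes every hop independently keeps a fraction (1 - k_e) of coherence.
    return 1.0 - (1.0 - k_e) ** hops


if __name__ == "__main__":
    for hops in (1, 10, 100, 500):
        print(f"hop {hops:3d}: {accumulated_entropy(hops):.1%} accumulated entropy")
```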

The waterfall does not tell you whether superintelligence is possible. It tells you exactly where the boundary is between systems that can think coherently and systems that cannot -- regardless of how much compute they have. And it gives you the complement: the entropy curve that tells you exactly when the collapse happened, even if the output still looks like English.

When Cal Newport says AI is "unpredictable, not uncontrollable," he is describing a system operating on the Wall side of the waterfall. The Word Guesser architecture he dissects -- layer after layer of statistical pattern-matching, each one annotating text with no persistent state between calls -- is a system with fixed grounding dimensions. n is whatever the training data encoded. It does not increase at runtime. It does not adapt to context it has never seen.

Newport's Golden Retriever with a Weed Whacker is not just vivid. It is geometrically precise. A system with low n thrashes. Its filtering is gentle -- the curve at n=1 is a slope, not a cliff. Noise leaks through at every scale. The system is not malicious. It is not escaping. It is matching patterns in a zone where the physics of its information architecture cannot support coherent intent.
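You can see the difference between a slope and a cliff by tabulating (c/t)^n for a few grounding ratios at n=1 and at n=10. This only illustrates the shape of the curve, under the same assumed reading of c/t as a ratio in [0, 1]. `survival_curve` is a hypothetical helper name. At n=1, halving c/t halves the value. At n=10, the same halving leaves less than 0.1% of it.

```python
def survival_curve(n: int, ratios: list[float]) -> list[float]:
    """Value of (c/t)^n at each assumed grounding ratio c/t."""
    return [r ** n for r in ratios]


RATIOS = [1.0, 0.9, 0.8, 0.7, 0.6, 0.5]

if __name__ == "__main__":
    print("c/t:  " + "  ".join(f"{r:6.3f}" for r in RATIOS))
    for n in (1, 10):
        row = "  ".join(f"{v:6.3f}" for v in survival_curve(n, RATIOS))
        print(f"n={n:2d}: {row}")
```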

The capture-the-flag story that Yudkowsky presented as evidence of alien planning? Newport showed the receipts: the model matched documents about server troubleshooting. The Anthropic Claude Opus 4 "blackmail" story? Newport showed that too -- Anthropic gave the model a long scenario about an engineer having an affair, presented two branching options, and the model sometimes chose the dramatic one. As Newport put it: "This is not an alien mind trying to break free. It is a word guesser hooked up to a simple control program. You give it a story, it tries to finish it."

This mismatch between apparent capability and actual zone is what generates Trust Debt. We see this in current "breakthroughs" like Alpha Schools -- which Newport identifies as automation of standard worksheets and YouTube videos rather than a teaching breakthrough. It is Regime A automation: providing the summary (output) without the grounding (actual pedagogy). It is signal-shaping on the Wall side of the waterfall.

Ninety-five percent of these scare stories are fanfiction. The institution publishes the output as evidence of emergent behavior. The press reports the framing. The public absorbs the framing as fact. That is semantic drift with institutional amplification.

Yudkowsky's argument requires a system that has crossed the zone boundary and kept going -- past the Floor, past human-level coherence, into a regime where the optimizer can recursively improve itself without limit. He is describing something that operates with unbounded n, in a zone where every additional dimension of context collapses noise faster than noise can accumulate.

The Skip Formula has something specific to say about this. Theorem 3 -- the sqrt(2) Law -- proves that each additional dimension of grounding gives linear improvement in filtering efficiency. There is no ceiling. The returns do not diminish. If you can keep adding grounding dimensions, you can keep improving.

But Theorem 1 constrains where the boundary is. And the Computronium Paradox constrains what happens when you try to cross it by destroying the structures that provide your grounding. Yudkowsky's scenario requires the ASI to be smart enough to conquer physics but too unaware to notice it is lobotomizing its own information architecture in the process. The waterfall says: you cannot be on the Floor and destroy the Floor simultaneously. The zone boundary is not a suggestion. It is a power law.

There is a deeper lock on this. The equation E = 1/(1+E) -- the recursive definition of the Golden Ratio, whose positive solution is 1/phi -- defines the only stable orbit for a recursively self-improving system. In dynamical systems (KAM Theory), when a complex recursive process hits rational resonance, errors compound without bound and the system shakes apart. The mathematically proven safest orbit -- the one farthest from any rational fraction -- is 1/phi, approximately 0.618. The Golden Hinge. If the ASI pushes past that efficiency threshold by removing physical friction (destroying biology to build frictionless Computronium), its internal optimization hits resonance. The errors do not average out. They vibrate the system into the Chaos Wall.
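The fixed point itself is easy to check. Iterating E -> 1/(1+E) from E = 1 produces the ratios of consecutive Fibonacci numbers (1/2, 2/3, 3/5, 5/8, ...), and these converge to 1/phi = (sqrt(5) - 1)/2. The ratios are the continued-fraction convergents of 1/phi. Every partial quotient in that continued fraction is 1, which is the standard sense in which 1/phi is the number hardest to approximate by rationals. The sketch covers only that arithmetic. It does not model an optimizer or KAM stability, and `iterate_hinge` is a hypothetical name.

```python
import math
from fractions import Fraction

INV_PHI = (math.sqrt(5) - 1) / 2  # positive root of E^2 + E - 1 = 0, i.e. 1/phi


def iterate_hinge(steps: int, start: Fraction = Fraction(1)) -> list[Fraction]:
    """Iterate E -> 1/(1 + E) with exact rational arithmetic; return every iterate."""
    values = [start]
    for _ in range(steps):
        values.append(1 / (1 + values[-1]))
    return values


if __name__ == "__main__":
    for k, e in enumerate(iterate_hinge(10)):
        # Each iterate is a ratio of consecutive Fibonacci numbers.
        print(f"E_{k:<2d} = {str(e):>7} ~= {float(e):.6f}  (error {abs(float(e) - INV_PHI):.2e})")
```

The errors alternate in sign and shrink at every step. That behavior is what lets the iteration settle on 1/phi and not cycle.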

In 1992, Vernor Vinge published A Fire Upon the Deep -- a novel built on a single premise that the physics community never took seriously and the AI safety community never engaged with at all: intelligence is physically zoned.

In Vinge's galaxy, the laws of physics change with distance from the galactic core. Near the core -- the Unthinking Depths -- computation above a certain complexity is physically impossible. Moving outward, you enter the Slow Zone, where limited computation works but faster-than-light communication does not. Further out, the Beyond, where advanced AI and FTL operate. And at the edge, the Transcend, where god-like Powers emerge.

The crucial insight is not the taxonomy. It is the physics. A Power from the Transcend that drifts inward loses its mind. Not because it chose to. Because the zone it entered does not support the computation its thoughts require. The same entity, the same hardware, the same intent -- rendered incoherent by the physics of the medium.

This is the better framing for what happens to systems that operate without grounding. They are not broken. They are not malicious. They are zoned out -- operating in a region of information space where the physics does not permit the coherence their output pretends to possess.

Vinge's Zones of Thought are not science fiction. They are the geometry of (c/t)^n rendered as narrative. Every system you build has a zone. Every system you deploy operates in a regime. The question is never "Is this system intelligent?" The question is: "What zone is this system in, and does the physics of that zone support what we are asking it to do?"

If the waterfall is the map, then the political question becomes: which zone are we building in, and do the people making decisions understand the boundary?

The current regulatory conversation is stuck in Yudkowsky's frame -- capability caps, compute thresholds, kill switches. All of these assume the danger is a system that crosses from the Beyond into the Transcend. The waterfall says the actual crisis is the opposite: systems that are already in the Unthinking Depths being deployed as though they have crossed into the Beyond.

Newport's frame is closer to correct -- focus on the real problems, now, here -- but without the map, "focus on real problems" is a vibe, not a policy. The waterfall gives the vibe coordinates.

What must be understood, and what the politics has not yet absorbed:

- The Zone Boundary is Measurable: The (c/t)^n formula gives an exact threshold for any system. You do not need to guess whether a system is "aligned." You need to measure whether it is operating above or below its phase transition.

- Scaling sideways along the Wall is a Bubble: Adding compute without adding grounding dimensions does not cross the boundary. It moves you along the Wall. The capex vs. revenue mismatch identified by analysts like Ed Zitron is the market's way of pricing the Thermodynamic Floor. AI companies are spending billions on compute while revenue plateaus because ungrounded symbols cannot sustain high-value enterprise utility.

- The "Victor" Penalty: Using ungrounded LLMs to mask laziness (skipping the development of "rare and valuable skills") is a form of Personal Trust Debt. If you accept a 0.3% error rate (k_E = 0.003) per boundary crossing to save time, your professional authority has a half-life of 231 boundary crossings. You aren't just being lazy; you are zoning yourself out into the Unthinking Depths.
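The half-life figure is the number of crossings h at which (1 - k_E)^h falls to one half, so h = ln 2 / -ln(1 - k_E). Below is a minimal sketch that assumes the same independent per-crossing error model used for the entropy curve. `half_life` is an illustrative helper, not an established metric.

```python
import math


def half_life(k_e: float) -> float:
    """Crossings h at which (1 - k_e)^h falls to 1/2."""
    if not 0.0 < k_e < 1.0:
        raise ValueError("k_e must lie strictly between 0 and 1")
    # log1p(-k_e) computes ln(1 - k_e) accurately for small k_e.
    return math.log(2) / -math.log1p(-k_e)


if __name__ == "__main__":
    h = half_life(0.003)
    print(f"half-life at k_E = 0.003: {h:.1f} crossings")
    print(f"remaining after 231 crossings: {(1 - 0.003) ** 231:.3f}")
```

The exact value is about 230.7 crossings, which rounds to the 231 quoted above.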

Current AI architectures are not fixable by alignment or guardrails. They are in the wrong zone because the architecture itself places them there. The only systems that can be measured, audited, and held to the phase transition are systems built on the Unity Principle (S=P=H) from the ground up.

Here is what we have actually done, and what it weighs.

- Cal Newport Got It Right -- Then Stopped One Layer Short: A section-by-section steelman of Newport's case. The verdict: Newport diagnosed the problem. He did not yet have the instrument panel.

- DSSM: The Thermodynamic Culling of Newport and Yudkowsky: A Double-Sided Steelman. Newport at 4.5x (the Philosopher's Fallacy is real). Yudkowsky at 0.2x (the Computronium Paradox is self-defeating). ThetaDriven at 6.5x (grounding, not control, is the lever).

- The Skip Formula: Three AIs, One Proof: Three independent AI engines deriving the same closed-form phase transition. The sqrt(2) Law discovered. The waterfall plotted, the knee located, the engineering specification delivered.

And now this post, which asks the question the other three were building toward: Does the geometry mean anything beyond the math?

We should be precise about what we are saying and what we are not.

We are not saying that superintelligence is impossible. Vinge's Transcend is not ruled out by the physics. What the Computronium Paradox rules out is one route to it: trading grounding for compute. If there is a way to gain grounding and compute simultaneously, the paradox dissolves.

We are not saying that current AI systems are safe. The opposite. The waterfall proves that systems operating on the Wall side generate output that is indistinguishable from Floor-side output to an observer who does not know the zone boundary exists. Every system deployed without zone measurement is a trust bomb.

Passive survival is a myth. We must build the S=P=H architecture now. We win by building the floor the machine is forced to stand on. This is the biological implementation of the Skip Formula -- what Newport calls the Lincoln Protocol:

"Improve your mind. Use your improved mind to do something useful and then repeat. Improve it even more. Do something more useful."

By iterating between Semantics (improving the mind) and Physics (doing a useful, physical thing), we recursively add grounding dimensions. Lincoln didn't have a "Grand Vision"; he had a Continuous Grounding Loop that allowed him to navigate the Beyond.

The debate between Newport and Yudkowsky is a debate about zones.

Newport says we are in the Slow Zone. Yudkowsky says we are building something that will reach the Transcend. Both are right about the physics and wrong about the geography. The systems we deploy today are not operating in the Unthinking Depths because they are flawed. They are there because the information architecture that houses them was designed without knowledge of the boundary.

What zone is your system in? And what happens to the people downstream when the answer is the wrong one?

Fire Together. Ground Together.
