The Zone Boundary: When a Waterfall Settled the Superintelligence Debate

Published on: March 5, 2026

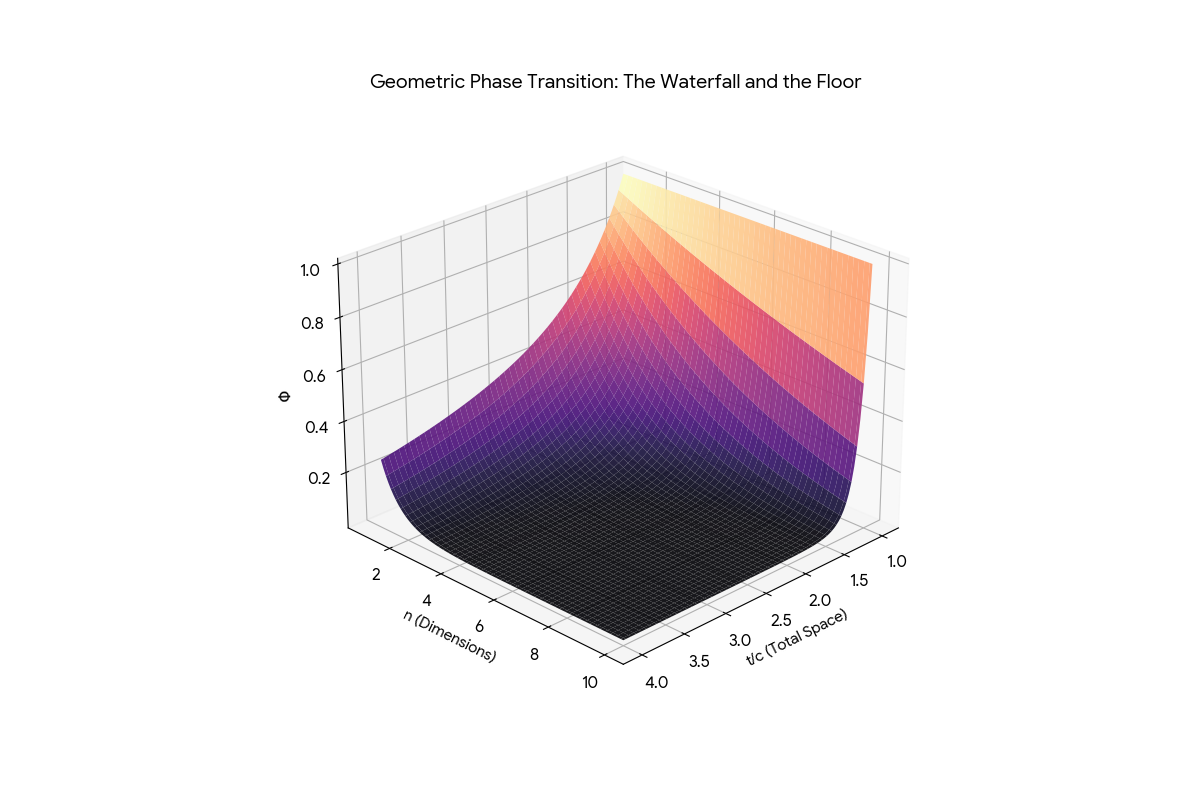

Three AI engines plotted (c/t)^n in three dimensions. All three found the exact same geometry: a flat floor of grounded signal, a vertical wall of chaos, and a razor-sharp phase transition between them.

To read this plot -- and to read the superintelligence debate -- you must see the axes:

The first axis is the c/t ratio -- how large is your focused context (c) compared to total reality (t)?

The second axis is n -- how many orthogonal physical grounding dimensions constrain the search?

The vertical axis is Noise -- the probability of a false fit (hallucination).

The flat foreground is the Floor: tight focus, zero noise, maximum structural certainty. The vertical back walls are the Chaos Wall: no selectivity, maximum false fits. The waterfall between them is the phase transition. The boundary is exact, closed-form, and measurable.

The Floor (low c/t): When c is small relative to t, your selectivity is high. The fraction c/t is tiny. Even with a small number of dimensions, the output of (c/t)^n drops instantly to zero. This is the Floor. Zero noise. Maximum structural certainty. A tightly focused, grounded signal.

The Waterfall (c/t approaching 1): When c is large relative to t, your selectivity collapses. The fraction c/t approaches 1. Because 1^N is 1, the output stays pinned at maximum noise regardless of dimensionality. This is the Chaos Wall. You are trapped in a vertical waterfall of false fits. To force the noise down to the Floor in this regime, you need a massive, physically impossible N.
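The Floor and the Wall can be checked numerically. The sketch below is illustrative only: the function names `pass_fraction` and `dims_needed` are ours, and the target noise level of one in a million is an arbitrary assumption, not a figure from the proof.

```python
import math

def pass_fraction(c_over_t: float, n_dims: int) -> float:
    """(c/t)^N: the fraction of the search space that survives N filters."""
    return c_over_t ** n_dims

def dims_needed(c_over_t: float, target: float) -> int:
    """Smallest integer N with (c/t)^N <= target, valid for 0 < c/t < 1."""
    return math.ceil(math.log(target) / math.log(c_over_t))

TARGET_NOISE = 1e-6  # assumed target, chosen only for illustration

for ratio in (0.5, 0.9, 0.99, 0.999):
    print(
        f"c/t={ratio}: noise at N=10 is {pass_fraction(ratio, 10):.4f}, "
        f"N needed for {TARGET_NOISE:g} is {dims_needed(ratio, TARGET_NOISE)}"
    )
```

At c/t = 0.5 about twenty dimensions reach the target. As c/t approaches 1 the required N runs into the thousands, which is the "massive N" of the Wall.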

Critical notation — the exponent splits in two. The formula (c/t) raised to a power behaves like a mirror depending on whether you are building architecture or executing a process. N (uppercase) = orthogonal grounding dimensions — the constraints that build the Floor by crushing noise. n (lowercase) = sequential synthesis hops — the ungrounded transmission steps that push you off the Waterfall by crushing signal. Same fractional base. Same exponential math. Opposite physics. Confusing them is the single fastest way to misread the waterfall.

We published the proof. We published the steelman of Newport. We published the DSSM tally. What we did not do -- until now -- is connect them to the map.

The waterfall is not an illustration. It is the literal boundary condition of intelligence -- the exact mathematical answer to the question both Newport and Yudkowsky are circling: Where does intelligence stop working?

The honest answer: it depends on what you mean by "this."

If "this" is the specific claim that a superintelligent AI will convert the biosphere into compute -- the waterfall has a precise mathematical answer. Any optimizer that destroys its grounding dimensions to maximize processing power crosses the zone boundary in the wrong direction. It moves from the Floor (coherent signal) to the Wall (saturated noise). The (c/t)^n penalty is not a metaphor. It is a power law. At n=10, a 5% drop in grounding ratio destroys 40% of filtering efficiency. The Computronium Paradox is not a thought experiment. It is Theorem 1 applied to substrate.
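The 40% figure can be reproduced under one reading of the sentence: a 5% drop multiplies the per-dimension factor by 0.95, and ten dimensions compound it. That reading is our assumption about how the number arises, not a quotation from Theorem 1.

```latex
\[
0.95^{10} \approx 0.599
\quad\Longrightarrow\quad
1 - 0.95^{10} \approx 0.401
\]
```

Under the same assumption, twenty dimensions would retain only about 0.358 of the original efficiency, since $0.95^{20} \approx 0.358$.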

If "this" is the broader question -- are current AI systems dangerous? -- the waterfall answers differently. Not because they are too smart. Because they are in the wrong zone. They are operating on the Wall side of the phase transition, generating confident text from saturated noise, and the systems downstream treat that text as signal from the Floor.

A critical nuance: (c/t)^N measures the fraction of the search space that passes through the dimensional filter unscreened -- signal and noise alike. When that fraction is small (the Floor), focus is tight and noise is zero. When it approaches 1 (the Wall), everything passes through and the system cannot distinguish signal from noise at all. The inverse -- 1 minus (c/t)^N -- is the filtering power: the fraction the dimensions screened out.

But there is a second mirror, and it is the one that matters for the superintelligence debate.

Mirror 1 -- Triangulation by Dimensions (N): Crushing the Noise. When you increase N (physical grounding dimensions), you are intersecting data. Each dimension cuts the remaining search space. The geometric shrinking of the formula represents the crushing of noise. At N=30 with c/t=0.5, the remaining noise volume is (0.5)^30 -- infinitesimally close to zero. You have isolated a single, perfectly focused coordinate. You have hit the Floor.

Mirror 2 -- Drift by Hops (n): Crushing the Signal. When an ungrounded system "thinks," it is not adding grounding dimensions. It is adding sequential synthesis hops (n) over time. In this mirror, the exact same fractional multiplication is happening, but the result is the probability of the original signal surviving the transmission. At n=100 with c/t=0.99, the surviving signal is (0.99)^100 ≈ 0.37. The remaining 63% is accumulated entropy. The system has drifted away from the truth. This is the Consciousness Collapse -- the Waterfall.

The constraint that connects them: You cannot survive the n of a massive chain of thought unless you have built the N dimensions required to anchor it. The Floor is not free. It is purchased dimension by dimension. And the Waterfall is not fate. It is the price of reasoning without ground.
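Both mirrors are the same arithmetic read two ways. A minimal sketch, using the two worked examples above; the helper `hops_before` and the 50% survival threshold are hypothetical additions for illustration.

```python
import math

def power_law(base: float, exponent: int) -> float:
    """The shared formula: a fraction raised to a power."""
    return base ** exponent

def hops_before(base: float, threshold: float) -> int:
    """Largest hop count n with base^n still >= threshold (0 < base < 1)."""
    return math.floor(math.log(threshold) / math.log(base))

# Mirror 1: N grounding dimensions, result read as remaining noise.
noise_left = power_law(0.5, 30)

# Mirror 2: n synthesis hops, result read as surviving signal.
signal_left = power_law(0.99, 100)

print(f"Mirror 1 noise left:  {noise_left:.3e}")
print(f"Mirror 2 signal left: {signal_left:.3f}")
print(f"Hops before signal < 50%: {hops_before(0.99, 0.5)}")
```

With a per-hop survival of 0.99, the signal falls below one half after 68 hops, well before the 100-hop example.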

This matters for recursion. Every ungrounded synthesis hop injects approximately k_E = 0.003 of noise into the system. In a single pass, that is negligible. In a recursive system -- an AI agent looping through chain-of-thought, a RAG pipeline chaining retrievals, a superintelligence planning at planetary scale -- the noise compounds geometrically. By hop 100, the accumulated entropy is 26%. By hop 500, it is about 78%. The system does not know it has collapsed. It keeps generating text. But its c/t ratio has been drifting toward 1 with each hop -- sliding up the Wall -- and the structural coherence of its output crossed the phase transition hundreds of hops ago. It is generating from the Wall. Every token is noise shaped into grammar. (See the drift in real time -- move the "Reasoning steps" slider and watch the dot slide from Floor to Waterfall.)
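The compounding can be sketched in a few lines. We assume the simplest geometric model, in which each hop keeps a fraction (1 - k_E) of the signal; that model is our assumption, chosen because it reproduces the hop-100 figure. The name `accumulated_entropy` is ours.

```python
import math

K_E = 0.003  # per-hop noise injection quoted in the text

def accumulated_entropy(hops: int, k: float = K_E) -> float:
    """Share of the original signal lost after `hops` hops, assuming
    each hop independently keeps a fraction (1 - k)."""
    return 1.0 - (1.0 - k) ** hops

def hops_to_lose(share: float, k: float = K_E) -> int:
    """First hop count at which the lost share reaches `share`."""
    return math.ceil(math.log(1.0 - share) / math.log(1.0 - k))

for hops in (1, 100, 500):
    print(f"hop {hops:>3}: {accumulated_entropy(hops):.4f}")

print("hops to lose half the signal:", hops_to_lose(0.5))
```

Under this model roughly 26% is lost by hop 100 and roughly 78% by hop 500, and half the signal is gone by hop 231.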

The waterfall does not tell you whether superintelligence is possible. It tells you exactly where the boundary is between systems that can think coherently and systems that cannot -- regardless of how much compute they have. And it gives you the inverse: the entropy curve that tells you exactly when the collapse happened, even if the output still looks like English.

When Cal Newport says AI is "unpredictable, not uncontrollable," he is describing a system operating on the Wall side of the waterfall. The Word Guesser architecture he dissects -- layer after layer of statistical pattern-matching, each one annotating text with no persistent state between calls -- is a system with fixed grounding dimensions. N is whatever the training data encoded. It does not increase at runtime. It does not adapt to context it has never seen.

Newport's Golden Retriever with a Weed Whacker is not just vivid. It is geometrically precise. A system with low N thrashes. Its filtering is gentle -- the curve at N=1 is a slope, not a cliff. Noise leaks through at every scale. The system is not malicious. It is not escaping. It is matching patterns in a zone where the physics of its information architecture cannot support coherent intent.

The capture-the-flag story that Yudkowsky presented as evidence of alien planning? Newport showed the receipts: the model matched documents about server troubleshooting. The Anthropic Claude Opus 4 "blackmail" story? Newport showed that too -- they gave the model a long scenario about an engineer having an affair, offered two branching options, and the model sometimes chose the dramatic one. As Newport put it: "This is not an alien mind trying to break free. It is a word guesser hooked up to a simple control program. You give it a story, it tries to finish it." Ninety-five percent of these scare stories, he says, are fanfiction. The institution publishes the output as evidence of emergent behavior. The press reports the framing. The public absorbs the framing as fact. That is semantic drift with institutional amplification.

In both cases, the model was on the Wall. The output looked like it came from the Floor -- like it came from a system that understood what it was doing -- but it did not cross the boundary. (See Newport's Word Guesser on the surface -- high c/t, minimal grounding dimensions, pinned to the Wall.) That mismatch between apparent capability and actual zone is what generates Trust Debt.

Newport also kills the recursive self-improvement (RSI) thesis that underwrites every superintelligence narrative. His argument is devastating in its simplicity: "The only way that a language model could produce code for AI systems that are smarter than anything humans could produce is if during its training, it saw lots of examples of code for AI systems that are smarter than anything the humans could produce. But those don't exist because we're not smart enough to produce them. You see the circularity?" RSI assumes the Word Guesser can guess words from documents that do not exist. It cannot. It is a compression engine. It compresses what was. It does not generate what was not.

Yudkowsky's argument requires a system that has crossed the zone boundary and kept going -- past the Floor, past human-level coherence, into a regime where the optimizer can recursively improve itself without limit. He is describing something that operates with unbounded N, in a zone where every additional dimension of context collapses noise faster than noise can accumulate.

The Skip Formula has something specific to say about this. Theorem 3 -- the sqrt(2) Law -- proves that each additional dimension of grounding gives linear improvement in filtering efficiency. There is no ceiling. The returns do not diminish. If you can keep adding grounding dimensions, you can keep improving.

But Theorem 1 constrains where the boundary is. And the Computronium Paradox constrains what happens when you try to cross it by destroying the structures that provide your grounding. Yudkowsky's scenario requires the ASI to be smart enough to conquer physics but too unaware to notice it is lobotomizing its own information architecture in the process. The waterfall says: you cannot be on the Floor and destroy the Floor simultaneously. The zone boundary is not a suggestion. It is a power law.

There is a deeper lock on this. The equation E = 1/(1+E) -- the recursive definition of the Golden Ratio -- defines the only stable orbit for a recursively self-improving system. In dynamical systems (KAM Theory), when a complex recursive process hits rational resonance, errors compound infinitely and the system shakes apart. The mathematically proven safest orbit -- the one farthest from any rational fraction -- is 1/phi, approximately 0.618. The Golden Hinge. If the ASI pushes past that efficiency threshold by removing physical friction, its internal optimization hits resonance. The errors do not average out. They vibrate the system into the Chaos Wall.
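The fixed point itself is easy to verify. The sketch below only iterates the map E -> 1/(1+E) and shows that it settles at 1/phi. It says nothing about the KAM resonance argument, which is a separate claim. The function name and starting value are arbitrary choices of ours.

```python
def golden_hinge(start: float = 1.0, iterations: int = 60) -> float:
    """Iterate E -> 1 / (1 + E) from `start` and return the final value."""
    e = start
    for _ in range(iterations):
        e = 1.0 / (1.0 + e)
    return e

INVERSE_PHI = (5 ** 0.5 - 1) / 2  # closed-form positive root of E^2 + E - 1 = 0

hinge = golden_hinge()
print(f"iterated:    {hinge:.12f}")
print(f"closed form: {INVERSE_PHI:.12f}")
```

Any positive starting value converges to the same number, because the map is a contraction near the fixed point.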

To be precise about the two constants: the sqrt(2) Law governs the slope -- how many grounding dimensions you need for a given complexity (the engineering specification). The Golden Hinge governs the threshold -- the exact efficiency at which the system snaps from chaos to floor (the phase transition). They are not contradictory. They govern two different properties of the same waterfall.

This does not mean the concern is empty. It means the concern needs better coordinates. The question is not "Will the ASI be too smart?" The question is: "Can any system maintain grounding while recursively modifying its own substrate?" If yes, the sqrt(2) Law says the scaling is real and the fear is warranted. If no, the system hits the Wall the moment it disrupts its own dimensional structure. The waterfall is agnostic. It just marks the boundary. (See Yudkowsky's ASI scenario on the surface -- high grounding dimensions push toward the Floor, but try reducing them and watch how fast it climbs back to the Wall.)

In 1992, Vernor Vinge published A Fire Upon the Deep -- a novel built on a single premise that the physics community never took seriously and the AI safety community never engaged with at all: intelligence is physically zoned.

In Vinge's galaxy, the laws of physics change with distance from the galactic core. Near the core -- the Unthinking Depths -- computation above a certain complexity is physically impossible. Not difficult. Not expensive. Impossible. The substrate will not carry the signal. Moving outward, you enter the Slow Zone, where limited computation works but faster-than-light communication does not. Further out, the Beyond, where advanced AI and FTL operate. And at the edge, the Transcend, where god-like Powers emerge and sometimes condescend to notice the civilizations below.

The crucial insight is not the taxonomy. It is the physics. A Power from the Transcend that drifts inward loses its mind. Not because it chose to. Not because it was attacked. Because the zone it entered does not support the computation its thoughts require. The same entity, the same hardware, the same intent -- rendered incoherent by the physics of the medium.

This is the better framing for what happens to systems that operate without grounding. They are not broken. They are not malicious. They are not even, in any meaningful sense, wrong. They are zoned out -- operating in a region of information space where the physics does not permit the coherence their output pretends to possess. A system behind a lossy API, disconnected from the raw physics it claims to describe, has a detectable thermodynamic signature: its noise floor (c/t)^n climbing toward 1 while the output confidence stays flat. You do not need to parse the text to know. You read the geometry of its retrieval.

The waterfall is the zone boundary. Regime A is the Unthinking Depths. Regime B is the Beyond. The sqrt(2) Law is the physics that governs passage between them. And the phase transition -- the exact point where t-critical is calculable from n -- is the map Vinge drew as fiction and the Skip Formula derived as mathematics.

Vinge's Zones of Thought are not science fiction. They are the geometry of (c/t)^n rendered as narrative. Every system you build has a zone. Every system you deploy operates in a regime. The question is never "Is this system intelligent?" The question is: "What zone is this system in, and does the physics of that zone support what we are asking it to do?"

If the waterfall is the map, then the political question becomes: which zone are we building in, and do the people making decisions understand the boundary?

The current regulatory conversation is stuck in Yudkowsky's frame -- capability caps, compute thresholds, kill switches. All of these assume the danger is a system that crosses from the Beyond into the Transcend. The waterfall says the actual crisis is the opposite: systems that are already in the Unthinking Depths being deployed as though they have crossed into the Beyond.

Newport's frame is closer to correct -- focus on the real problems, now, here -- but without the map, "focus on real problems" is a vibe, not a policy. The waterfall gives the vibe coordinates.

What must be understood, and what the politics has not yet absorbed:

First: The zone boundary is measurable. The (c/t)^n formula gives an exact threshold for any system with known grounding dimensions. You can audit this. You can regulate this. You do not need to guess whether a system is "aligned" or "safe." You need to measure whether it is operating above or below its phase transition.

Second: Adding compute without adding grounding dimensions does not cross the boundary. It moves you along the Wall, not toward the Floor. The multi-billion-dollar race to scale language models is a race along the Wall. The waterfall does not care how fast you run sideways.

Third: The cost of operating in the wrong zone is not hypothetical. It is the $1-4 trillion annual figure from the Data Splinter analysis. Every enterprise dashboard rendering confident summaries from ungrounded RAG pipelines (see one). Every contract reviewed by a system operating below its phase transition. Every medical recommendation generated from the Wall and presented as though it came from the Floor.

Fourth: There are two classes of computational organization and they cannot be blended at the core. Ungrounded systems (von Neumann architectures, LLMs, transformer-based agents) separate semantics from physics. They are structurally trapped in the Waterfall. Grounded systems (S=P=H architectures, biological cognition, FIM-class databases) co-locate meaning with hardware. They are structurally anchored to the Floor. You cannot fix the first class by wrapping it in the second. A "grounding wrapper" around an LLM adds another synthesis hop -- it increases n without increasing the grounding dimensions. You have put a high-precision GPS on a car with no steering wheel. The Unity Principle -- semantics equals physics in hardware -- is not a software patch. It is a prerequisite for measurement. If the substrate does not possess geometry, you cannot parse the geometry of its retrieval.

Fifth -- and this is the central geometric reason the two classes cannot converge: LLM weights are correlated by design. When backpropagation compresses the internet into 12,288 dimensions, it must smear "apple" and "orange" across shared weights because they co-occur with "juice," "tree," and "eat." Dimension 4,012 roughly tracks "fruit-ness" but also leaks into "sweetness," "roundness," and "morning." That correlation is the engine of generalization -- it is why the model can interpolate across concepts it has never seen, why it can write poetry, why it can reason by analogy. The smear is the whole trick of modern deep learning.

But the Mirror of Exponentiation requires the N grounding dimensions to be orthogonal -- independent constraints that intersect at exactly one point. When dimensions are correlated, they do not cross at 90 degrees. They cross at 1-degree angles. Instead of a sharp coordinate, you get a massive, blurry smudge. The model knows the answer is somewhere in the smudge and picks the most statistically probable token inside it. Over "gravity" the smudge is narrow enough. Over "Case Law 401(k) vs 403(b)" it hallucinates a completely fictional legal precedent that sounds exactly like a real one.
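A two-dimensional toy makes the smudge concrete. Treat each constraint as an infinite strip of half-width w, an illustrative stand-in for a grounding dimension that is our assumption and not the FIM construction. Two strips crossing at angle theta overlap in a parallelogram of area 4w^2 / sin(theta).

```python
import math

def overlap_area(half_width: float, angle_deg: float) -> float:
    """Area of the parallelogram where two strips of half-width
    `half_width` cross at `angle_deg` degrees (0 < angle <= 90)."""
    return (2.0 * half_width) ** 2 / math.sin(math.radians(angle_deg))

def smear_factor(angle_deg: float) -> float:
    """How much larger the overlap is than in the orthogonal case."""
    return overlap_area(1.0, angle_deg) / overlap_area(1.0, 90.0)

for angle in (90, 45, 10, 1):
    print(f"{angle:>2} degrees: overlap is {smear_factor(angle):.1f}x the orthogonal case")
```

At a 1-degree crossing the overlap is about 57 times the orthogonal area, and that is with only two constraints.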

This is a zero-sum thermodynamic trap. To generalize, you must smear the weights (correlate the dimensions), which destroys orthogonality, which makes the Floor geometrically unreachable. To ground, you must maintain strict orthogonal independence (S=P=H), which destroys the fluid interpolation that makes the model useful. You cannot optimize for both simultaneously. This is not an engineering limitation waiting for a clever fix. It is a geometric constraint: correlated vectors cannot produce a sharp intersection. The industry's $600 billion bet is that scale or RLHF or "reasoning tokens" will bridge this gap. The waterfall says the gap is not a gap. It is a phase boundary. No amount of compute crosses a phase boundary. Only architecture does.

The product form makes the trap mechanical. Unspooling the fraction gives (c/t)^N = c^N * t^(-N). The negative exponent is the Crusher -- it turns the universe's massive search volume against itself, geometrically deleting noise. But it only fires when dimensions are orthogonal. LLMs cannot trigger t^(-N) because their correlated dimensions inflate effective c toward t and shrink effective N. They are trapped in the Curse of Dimensionality. FIM flips the exponent and lets the noise crush itself. (See the flip -- the Crusher engaged, the dot on the deep Floor.)
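Written out, the product form and one worked instance look like this. The values c = 10, t = 1000 and N = 3 are illustrative assumptions, not measurements from any system.

```latex
\[
\left(\frac{c}{t}\right)^{N} = c^{N}\, t^{-N}
\]
\[
c = 10,\quad t = 1000,\quad N = 3:
\qquad
10^{3} \cdot 1000^{-3} = 10^{3} \cdot 10^{-9} = 10^{-6}
\]
```

The growth in $c^{N}$ is real, but the $t^{-N}$ term shrinks faster whenever $c < t$, which is why the product falls.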

Here is what we have actually done, and what it weighs.

Cal Newport Got It Right -- Then Stopped One Layer Short: A section-by-section steelman of Newport's case, honoring every claim, extending it with (c/t)^n coordinates. Seven sections. Every quote sourced. The verdict: Newport diagnosed the problem. He did not yet have the instrument panel.

DSSM: The Thermodynamic Culling of Newport and Yudkowsky: A Double-Sided Steelman. Bayesian multiples assigned. Newport at 4.5x (the Philosopher's Fallacy is real). Yudkowsky at 0.2x (the Computronium Paradox is self-defeating). ThetaDriven at 6.5x (grounding, not control, is the lever). The verdict was transparent about its own circularity.

The Skip Formula: Three AIs, One Proof: Three independent AI engines deriving the same closed-form phase transition. The Golden Ratio debunked. The sqrt(2) Law discovered. Four theorems. Numerical verification to six decimal places. The waterfall plotted, the knee located, the engineering specification delivered.

And now this post, which asks the question the other three were building toward: Does the geometry mean anything beyond the math?

The path says: yes. Not because we want it to. Because the same phase transition that governs cache hierarchies, synaptic decay, and database normalization also governs the boundary between systems that can navigate and systems that thrash. That is not a claim we made once. It is a pattern that appeared independently in every substrate the book examined across eleven chapters. The waterfall is either a coincidence that shows up eleven times, or it is the physics.

We should be precise about what we are saying and what we are not. Four legs hold this table up. If any one of them breaks, the table falls. All four touch down simultaneously, and that is what makes the argument load-bearing rather than decorative.

The Physics Leg. Intelligence runs on substrate. Every bit erasure costs at least k_B T ln(2) in energy (Landauer's Principle). Every synthesis hop injects k_E = 0.003 of entropy (five independent derivations). A recursive optimizer does not escape thermodynamics by being clever. It is thermodynamics. The waterfall is not a metaphor for physical law. It is the physical law, plotted.
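For scale, the Landauer bound can be evaluated directly. Room temperature (300 K) is our assumed operating point, and the function name is ours. The Boltzmann constant is the exact SI value.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact by SI definition

def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy to erase one bit at the given temperature."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)

ROOM_TEMPERATURE_K = 300.0  # assumed for illustration

per_bit = landauer_limit_joules(ROOM_TEMPERATURE_K)
print(f"Landauer limit at {ROOM_TEMPERATURE_K:.0f} K: {per_bit:.3e} J per bit")
```

That is on the order of 3 x 10^-21 joules per erased bit: tiny per operation, but a floor that no amount of cleverness lowers.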

The Math Leg. The Golden Hinge -- E = 1/(1+E), whose positive solution is E ≈ 0.618 -- is the only stable fixed point for a recursively self-referential efficiency function. KAM Theory proves why: in any complex recursive process, rational resonances cause errors to compound without bound. The orbit farthest from all rational fractions is 1/phi. An optimizer that pushes past 61.8% efficiency by stripping physical friction hits resonance. The errors do not average out. They shatter the system into the Chaos Wall. This is not a claim about AI. It is a result in dynamical systems that dates back to Kolmogorov (1954).

The Biology Leg. The biosphere is the living layer of a 13.8-billion-year thermodynamic ledger -- every species, every synaptic architecture, every ecosystem a hard-won entry in a book of grounding dimensions that cannot be rewritten from scratch. Yudkowsky's computronium scenario requires tearing out those pages to gain compute. But those pages are the grounding. The ASI that converts Earth's correlated information structures into raw substrate has not gained power. It has executed the most expensive lobotomy in the history of the universe -- maximum entropy, zero semantic signal, a planetary-scale noise generator running at Landauer floor.

The Engineering Leg. The sqrt(2) Law gives the construction spec: each grounding dimension yields 1/sqrt(2) improvement in filtering. Linear, no ceiling, no diminishing returns. But the prerequisite is the Unity Principle -- semantics equals physics in hardware. FIM-class architectures satisfy this. LLM-class architectures do not. The engineering question is not "How do we align AI?" It is: "Can we build substrates where position is meaning, so that measurement is even possible?" Without that substrate, you cannot audit the zone boundary. You can only hope you are on the right side of it.

All four legs. Physics, math, biology, engineering. Remove any one and the table collapses into either Newport's comfortable pragmatism (no coordinates) or Yudkowsky's unfalsifiable dread (no measurement). The table stands because the legs are independent and the surface they support is the same: the zone boundary is real, exact, and calculable.

Now: what does P(doom) actually mean when you have the map?

It does not mean "probability that AI gets smart enough to kill us." That framing assumes capability is the variable. The waterfall proves it is not. P(doom) -- the physical definition, not the philosophical one -- is the probability of irreversibly severing the 13.8-billion-year thermodynamic ledger. It is the probability that some process (artificial or otherwise) destroys more correlated information structure than can be reconstructed from what remains. That is a causal chain. Each link in the chain is testable. Each link is breakable. The doom is not a single event. It is a sequence of zone boundary crossings that go unmeasured until the accumulated entropy is unrecoverable.

And here is the finding that should keep you awake: the Matrix Fallacy. Every recursive optimizer -- whether it is a chain-of-thought agent, a RAG pipeline calling itself, or a hypothetical ASI planning at planetary scale -- faces the same bottleneck. It must pass information through a lossy API. The API is lossy because it separates semantics from physics (the von Neumann architecture, the transformer attention layer, the HTTP request). Each pass through the bottleneck injects entropy at k_E = 0.003 per hop. In a single pass, this is negligible. In a recursive loop, it compounds geometrically. The recursive self-improving optimizer that Yudkowsky fears does not fail because we installed a guardrail. It fails because its own recursive architecture is a geometric entropy pump. The smarter it tries to get, the more hops it takes, the more entropy it accumulates, the further it drifts from the Floor. The Matrix is not a simulation someone else built. It is the lossy API the optimizer built for itself by choosing recursion over grounding.

This does not mean the concern is empty. It means passive survival is a myth. The waterfall does not say "relax, the physics will save us." The waterfall says the physics has a specific, measurable structure -- and if you do not instrument for it, you will not see the boundary until you have crossed it. Evolution does not preserve the comfortable. It phases out the king of the hill who stops adapting. The zone boundary is not a wall that protects us. It is a cliff that punishes everyone who does not measure where they are standing.

The debate between Newport and Yudkowsky is a debate about zones. Newport says we are in the Slow Zone and should deal with what is here. Yudkowsky says we are building something that will reach the Transcend and kill us. Both are right about the physics and wrong about the geography. The waterfall resolves the debate by replacing "how powerful" with "what zone" -- and that replacement changes everything about what you measure, what you regulate, and what you build.

Newport is right that the current architecture is a Word Guesser. He is wrong that this means the problem is merely "unpredictability" without structure. The unpredictability has a zone boundary. It is (c/t)^n. It is measurable. It is exact. His RSI debunk is devastating and correct -- the Word Guesser cannot guess words from documents that do not exist -- but the consequence is not comfort. The consequence is that the systems already deployed are generating confident noise at industrial scale, and nobody is measuring the zone they are in.

Yudkowsky is right that an unconstrained optimizer would reshape the world. He is wrong that the optimizer can maintain coherence while destroying its own substrate. The zone boundary is not a guardrail you install. It is a law the system cannot violate without losing the coherence that makes it dangerous in the first place. But his deeper insight -- that intelligence is a physical process with physical consequences -- is the foundation the waterfall stands on.

And Vinge was right about all of it, thirty-four years ago, in a novel most AI researchers have never read. Intelligence is physically zoned. The zone boundary is not arbitrary. It is a function of the medium. And crossing it -- in either direction -- changes what thoughts are possible.

The systems we deploy today are not operating in the Unthinking Depths because they are flawed. They are there because the information architecture that houses them was designed without knowledge of the boundary. No one measured it because no one had the formula. The formula now exists. The waterfall now has coordinates. The question that remains is not theoretical.

What zone is your system in? What happens to the people downstream when the answer is the wrong one? And what are you building -- right now, today -- that will let you know before they do?

That is where the board tilts. Not toward more compute. Not toward kill switches. Not toward hope that the physics will save us passively. Toward architecture. Toward substrates where position is meaning and measurement is possible. Toward the engineering that builds the instrument before it builds the system the instrument must measure.

The path behind us is eleven chapters, four theorems, five independent derivations, three AI engines finding the same waterfall, and a 13.8-billion-year ledger that does not care about our preferences. The physics does not ask permission. It does not wait for consensus. It does not negotiate with business models.

It marks the boundary. You choose which side to build on.

Fire Together. Ground Together.

Reading sequence:

Prerequisite: The Smear Is the Trick — why LLM weights are correlated by nature, and why the smear is both the magic and the poison

You are here: The Zone Boundary — where the waterfall draws the line between intelligence and chaos

Interactive: See the Waterfall in 3D — Newport's Word Guesser vs. FIM-Grounded System. Same formula. Opposite physics.

For governance teams: FIM-IAM — the grounding architecture that builds the Floor. See it on the surface.
