Permission = Alignment: How ThetaSteer Proves the IAMFIM Patent in 2,847 Lines of Rust

Published on: January 3, 2026

Traditional IAM (Identity and Access Management) gives you a static list in which User X has Admin Access. Check the list, grant or deny. Simple. Also catastrophically broken for AI agents.

The IAMFIM patent makes a radical claim: "Permission isn't a binary grant. It's a continuous state of Alignment. An entity - human or agent - has permission only as long as its intent is verified against a higher truth."

Sounds philosophical? Maybe. But we just built it in Rust. And it compiles.

Here is the architecture that makes "Permission = Alignment" concrete.

Tier 0 is Ollama (The Loop), the local LLM running on your machine. It processes every context change in real time at zero cost beyond compute. It plays the role of System 1: fast, reflexive categorization.

Tier 1 is Claude (The Auditor), the cloud LLM used for verification. The system calls it when local confidence drops OR event velocity exceeds local processing capacity. The cost is low: it is paid per API call, and only on escalation. It plays the role of System 2: slow, deliberate reasoning.

Tier 2 is the Human (The Anchor), the ground truth and the unchangeable reference frame. The system calls on the human when Claude is unsure OR drift becomes critical. The cost is high because it requires human attention. The human is the "physics" against which all alignment is measured.

The key insight is that each tier is a fractal of the one above. Ollama's fast categorization mirrors Claude's reasoning in compressed form. Claude's analysis mirrors Human intent in expanded form. The math is the same at every level.

Here is the math that makes self-correction inevitable:

Confidence_Effective = Confidence_Raw - (0.05 x chain_length)

Every time the LLM makes a decision based on its own previous decisions without external verification, chain_length increments and the confidence penalty grows.

Consider this example. Ollama categorizes with 0.85 confidence. After 8 self-references the calculation becomes 0.85 - (0.05 x 8) = 0.45. The result drops from Green (autonomous) to Red (human review).

After 14 self-references, even a perfect 1.0 confidence becomes 1.0 - (0.05 x 14) = 0.30, which falls into the Blue zone and forces escalation to Claude.

The system cannot drift indefinitely because the math guarantees periodic re-grounding.
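To make the arithmetic above easy to check, here is a minimal sketch of the decay formula. The function name, the constant name, and the clamp at 0.0 are assumptions made for illustration; only the 0.05 penalty per self-reference comes from the formula itself.

```rust
/// Penalty subtracted for each decision made without external verification
/// (the 0.05 in the formula above). The constant name is hypothetical.
const DECAY_PER_LINK: f64 = 0.05;

/// Confidence_Effective = Confidence_Raw - (0.05 x chain_length).
/// Clamping the result at 0.0 is an assumption of this sketch, not
/// something the formula states.
fn effective_confidence(raw: f64, chain_length: u32) -> f64 {
    (raw - DECAY_PER_LINK * f64::from(chain_length)).max(0.0)
}
```

Calling `effective_confidence(0.85, 8)` gives approximately 0.45 and `effective_confidence(1.0, 14)` gives approximately 0.30, matching the two examples. Because the results are floating-point values, compare them with a small tolerance rather than with exact equality.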

The first problem is velocity. You Alt+Tab through 10 windows in 3 seconds while Ollama takes 1-3 seconds per inference. By the time it processes Window 1, you are on Window 10. The system is permanently behind, categorizing ghosts.

The fix is Burst Mode (Velocity Escalation). When queue depth exceeds 5 items, the system stops processing events one by one. Instead, it aggregates all pending events into a summary and escalates to Claude with one prompt asking "What is the user ACTUALLY doing?" Claude responds with something like "Debugging a runtime error." Ten noise events collapse into one meaningful signal.

Patent proof: high-frequency operations can be regulated by low-frequency intent. The grid captures meaning, not noise.
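The velocity escalation described above can be sketched roughly as follows. The post names check_burst_mode() and summarize_burst() but does not show them, so the signatures, the ContextEvent and NextStep types, and the prompt wording here are all hypothetical. The sketch only builds the prompt text; it does not call Claude.

```rust
use std::collections::VecDeque;

/// Queue depth above which events are batched (the "5 items" in the text).
const BURST_THRESHOLD: usize = 5;

/// Hypothetical event type: one observed context change.
#[derive(Debug, Clone)]
struct ContextEvent {
    window_title: String,
}

/// What the loop should do next (hypothetical type).
#[derive(Debug)]
enum NextStep {
    /// Normal path: hand a single event to the local LLM.
    ProcessOne(ContextEvent),
    /// Burst path: one aggregated prompt for the Tier 1 auditor.
    EscalateBurst(String),
    /// Nothing is pending.
    Idle,
}

/// Collapse many pending events into a single prompt.
fn summarize_burst(events: &[ContextEvent]) -> String {
    let titles: Vec<&str> = events.iter().map(|e| e.window_title.as_str()).collect();
    format!(
        "The user moved through {} contexts in quick succession: {}. \
         What is the user ACTUALLY doing?",
        events.len(),
        titles.join(", ")
    )
}

/// If the queue is deeper than the threshold, drain it into one summary;
/// otherwise pop a single event for one-by-one processing.
fn check_burst_mode(queue: &mut VecDeque<ContextEvent>) -> NextStep {
    if queue.len() > BURST_THRESHOLD {
        let pending: Vec<ContextEvent> = queue.drain(..).collect();
        NextStep::EscalateBurst(summarize_burst(&pending))
    } else {
        match queue.pop_front() {
            Some(event) => NextStep::ProcessOne(event),
            None => NextStep::Idle,
        }
    }
}
```

Draining the whole queue on escalation is the design choice that matters here: once the summary is sent, none of the stale events remain to be categorized individually.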

The second problem is the echo chamber. Ollama miscategorizes "Slack" as "Strategy." In the next loop it reads its own history, sees "Slack = Strategy," and uses that to justify the same wrong categorization. The error becomes a permanent, self-reinforcing rule.

The fix is Confidence Decay on Self-Reference, implemented in this Rust code:

    if meta.machine_streak > 5 && decayed >= 0.7 {
        return 0.69; // Force to Red tier
    }

If the LLM has made more than 5 decisions without human or Claude verification, it cannot stay in Green (autonomous) tier. The math forces escalation.
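For context, here is one way the streak counter around that check might be wired up. Only `machine_streak`, the `> 5` test, and the 0.7 and 0.69 values come from the snippet above. The DecisionMeta type, its methods, and the cap_if_unverified function are hypothetical, and `decayed` is taken as an input because the post does not show how it is computed.

```rust
/// Hypothetical bookkeeping for consecutive machine-only decisions.
#[derive(Debug, Default)]
struct DecisionMeta {
    machine_streak: u32,
}

impl DecisionMeta {
    /// The local LLM decided something without outside verification.
    fn record_machine_decision(&mut self) {
        self.machine_streak += 1;
    }

    /// Claude or a human verified a decision, so the streak starts over.
    /// Resetting to zero is an assumption of this sketch.
    fn record_external_verification(&mut self) {
        self.machine_streak = 0;
    }
}

/// Apply the cap from the snippet above: after more than 5 unverified
/// decisions, a confidence of 0.7 or higher is forced down to 0.69.
fn cap_if_unverified(decayed: f64, meta: &DecisionMeta) -> f64 {
    if meta.machine_streak > 5 && decayed >= 0.7 {
        0.69 // Force to Red tier
    } else {
        decayed
    }
}
```

With six recorded machine decisions, `cap_if_unverified(0.9, &meta)` returns 0.69. After `record_external_verification()`, the same call returns 0.9 again.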

Patent proof: a system cannot permit itself to run indefinitely without external verification. Anti-drift is a mathematical guarantee, not a policy hope.

Here is what nobody is saying out loud: ThetaSteer is the proof-of-concept for agent governance.

Today it monitors humans. The input comes from screen OCR and the clipboard. At Tier 0, Ollama categorizes human activity. At Tier 1, Claude audits uncertainty. At Tier 2, the human clicks to ground.

Tomorrow you replace "Screen Monitor" with "Agent Log." The input comes from tool calls and API responses. At Tier 0, Ollama categorizes agent actions. At Tier 1, Claude audits agent reasoning. At Tier 2, the human approves agent actions.

The math does not change. If ThetaSteer can keep a human aligned with their own stated goals, the same system can keep an AI agent aligned with its operator's intent.
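One way to picture the swap from screen monitor to agent log is a shared trait for anything the tiers can observe. This is a sketch under stated assumptions: the Observation trait, both structs, and tier0_prompt are hypothetical names that do not appear in the ThetaSteer code shown in this post.

```rust
/// Anything the grounding loop can observe (hypothetical trait).
trait Observation {
    /// Where the observation came from.
    fn source(&self) -> &'static str;
    /// Text handed to the Tier 0 categorizer.
    fn describe(&self) -> String;
}

/// Today's input: text captured from the screen or clipboard.
struct ScreenCapture {
    text: String,
}

/// Tomorrow's input: a tool call made by an agent.
struct AgentToolCall {
    tool: String,
    arguments: String,
}

impl Observation for ScreenCapture {
    fn source(&self) -> &'static str {
        "screen monitor"
    }
    fn describe(&self) -> String {
        self.text.clone()
    }
}

impl Observation for AgentToolCall {
    fn source(&self) -> &'static str {
        "agent log"
    }
    fn describe(&self) -> String {
        format!("{}({})", self.tool, self.arguments)
    }
}

/// The Tier 0 prompt is built the same way for either input source.
fn tier0_prompt(obs: &dyn Observation) -> String {
    format!("[{}] Categorize this activity: {}", obs.source(), obs.describe())
}
```

Everything downstream of `tier0_prompt` (decay, tiering, escalation) only sees the description, which is the sense in which the math does not change.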

We are building the brake pedal and steering wheel for AGI. And we are testing it on ourselves first.

This is not vaporware. The Rust daemon builds and runs.

The core functions include run_continuous_loop() for the self-calling refiner running as fast as Ollama can process, get_duty_cycle() for thermal throttling prevention, conflate_queue() for stale context elimination, apply_confidence_decay() for echo chamber prevention, GroundingState for chain length tracking, check_burst_mode() for velocity escalation, verify_categorization() for Claude audit, and summarize_burst() for Gestalt collapse.

The current status shows 34 compiler warnings and zero errors. The patent is executable.

Here is the Grounding Loop running in production:

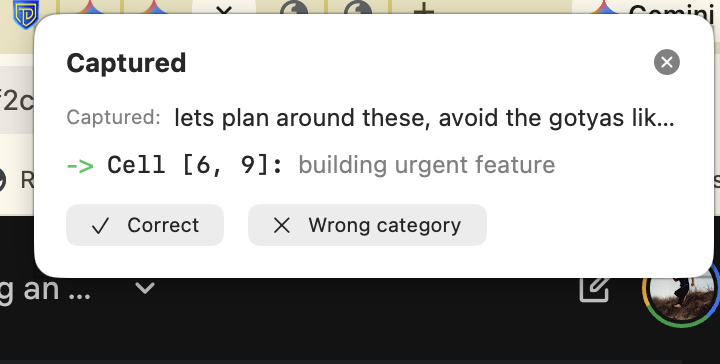

Reading the notification reveals several components. The "Captured:" field shows the raw text the system observed verbatim: "lets plan around these, avoid the gotyas lik..." The system saw this from clipboard or context and asked the Local LLM "What is this about?"

"Cell [6, 9]: building urgent feature" represents the LLM's answer. It parsed the natural language and mapped it to coordinates in the 12x12 grid. The prose "building urgent feature" explains why it chose those coordinates.

The two buttons serve as the Human Anchor providing "Correct" or "Wrong category" options.

What the local LLM did demonstrates something interesting about subdividing the grid this way: it navigated to the correct cell without being trained on the structure. Why?

You can feel the time delta in both dimensions. The Row axis (0-11) spans Strategy (years), Tactics (weeks), Operations (days), and Quick (minutes). Strategy and Tactics are the same kind of thinking just at different time scales. The Column axis (0-11) spans Personal (individual rhythm), Team (collaborative), and Systems (organizational). You can feel how Personal and Systems operate on different time horizons.

Here is what is strange: when you cross two time-like dimensions, the result looks like space. The grid feels navigable. Positions feel like places.

The LLM returned [6, 9]. Row 6 (Tactics.Building) is work that completes in weeks and is currently in the construction phase. Column 9 (Operations.Urgent) is work that affects operational (daily) rhythms and needs attention now.

"Plan around gotchas" genuinely exists at that intersection: tactical-scale work (weeks) affecting operational urgency (daily rhythm). The LLM found it because it is really there.

This is S=P=H in action: Semantics (the meaning of the text) equals Position (coordinates [6,9]) equals Hardware (where it is stored). The grid is not representing meaning - it IS meaning.
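A small sketch can make "position equals where it is stored" concrete. It assumes a row-major layout over the 12x12 grid, which the post does not specify; the Cell and Grid types and their methods are hypothetical.

```rust
/// Side length of the 12x12 grid.
const GRID_SIDE: usize = 12;

/// A grid coordinate such as [6, 9]. Hypothetical type for illustration.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct Cell {
    row: usize,
    col: usize,
}

impl Cell {
    /// Returns None when either coordinate falls outside 0-11.
    fn new(row: usize, col: usize) -> Option<Self> {
        if row < GRID_SIDE && col < GRID_SIDE {
            Some(Cell { row, col })
        } else {
            None
        }
    }

    /// Row-major slot index. The layout is an assumption of this sketch.
    fn storage_index(self) -> usize {
        self.row * GRID_SIDE + self.col
    }
}

/// 144 slots, one per cell: the coordinate is the storage location.
struct Grid {
    slots: Vec<Vec<String>>,
}

impl Grid {
    fn new() -> Self {
        Grid {
            slots: vec![Vec::new(); GRID_SIDE * GRID_SIDE],
        }
    }

    /// Store a piece of text in the slot its coordinates point to.
    fn store(&mut self, cell: Cell, text: &str) {
        self.slots[cell.storage_index()].push(text.to_string());
    }

    /// Read back everything stored at a cell.
    fn read(&self, cell: Cell) -> &[String] {
        &self.slots[cell.storage_index()]
    }
}
```

Under this layout, cell [6, 9] lands in slot 6 x 12 + 9 = 81, so looking up the meaning and looking up the memory are the same index computation.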

The buttons are the patent. The Correct button means the human grounds the mapping, which becomes Ground Truth. Future agents reference this: "Human approved [6,9] for this pattern." The Wrong button triggers the Escalation Protocol, breaks the Echo Chamber, and resets the grounding age.
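The two buttons could be handled along these lines. The post mentions a GroundingState type for chain length tracking but does not show its fields, so the fields, the Feedback enum, and the method names below are hypothetical. Treating a click on either button as external verification that resets the chain is also an assumption of this sketch.

```rust
use std::collections::HashMap;

/// The two notification buttons.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Feedback {
    Correct,
    WrongCategory,
}

/// Hypothetical shape for the grounding state.
#[derive(Debug, Default)]
struct GroundingState {
    /// Decisions made since the last external verification.
    chain_length: u32,
    /// Human-approved mappings from a text pattern to a grid cell.
    ground_truth: HashMap<String, (u8, u8)>,
    /// Set when a rejected categorization must go to a higher tier.
    pending_escalation: bool,
}

impl GroundingState {
    /// The local LLM made another unverified decision.
    fn record_machine_decision(&mut self) {
        self.chain_length += 1;
    }

    /// Apply one button click to the state.
    fn on_feedback(&mut self, pattern: &str, cell: (u8, u8), feedback: Feedback) {
        match feedback {
            // Correct: the mapping becomes ground truth for later lookups.
            Feedback::Correct => {
                self.ground_truth.insert(pattern.to_string(), cell);
                self.pending_escalation = false;
            }
            // Wrong: discard any stored mapping and flag an escalation.
            Feedback::WrongCategory => {
                self.ground_truth.remove(pattern);
                self.pending_escalation = true;
            }
        }
        // Either click is a human verification, so the chain restarts.
        self.chain_length = 0;
    }
}
```

After a Correct click for [6, 9], a later lookup of the same pattern in `ground_truth` returns `(6, 9)` without asking the LLM again, which is the "Human approved [6,9] for this pattern" reference described above.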

One button click. That is Permission = Alignment working.

If you are building AI agents, you need a governance framework before regulators mandate one. "The AI just decided" will not satisfy compliance requirements. ThetaSteer's 3-tier model provides the audit trail.

If you are investing in AI, recognize that autonomous systems without drift prevention are liabilities. The first company with provable alignment wins the enterprise market. This architecture scales from productivity tools to agent orchestration.

If you are worried about AI safety, understand that alignment is not about rules - it is about physics. A system that must re-ground mathematically cannot drift indefinitely. This is the "brake pedal" approach to AI safety.

Traditional Permission operates through simple lookup:

if user.role == "admin" { permit(); }

IAMFIM Permission operates through continuous alignment state:

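    // Alignment is recomputed on every decision, not looked up once.
    let alignment = grounding_state.effective_confidence();

    // The tier, and therefore the permission, follows from the current alignment.
    match ProcessingTier::from_confidence(alignment) {
        ProcessingTier::Green => permit_autonomous(),
        ProcessingTier::Red => permit_with_audit(),
        ProcessingTier::Blue => suspend_and_escalate(),
    }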

The difference is fundamental: Traditional permission is a lookup. IAMFIM permission is a continuous function of alignment state.
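For readers who want to see the tier mapping end to end, here is a minimal sketch of what ProcessingTier::from_confidence might look like. The 0.7 floor for Green follows from the 0.69 cap shown earlier. The 0.30 ceiling for Blue is inferred from the worked example in which 0.30 falls into the Blue zone; it is an assumption, as are the constant names and the string-returning `decide` helper.

```rust
/// The three processing tiers named in the match above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum ProcessingTier {
    Green,
    Red,
    Blue,
}

impl ProcessingTier {
    /// Lowest confidence that still counts as Green (from the 0.69 cap).
    const GREEN_FLOOR: f64 = 0.7;
    /// Highest confidence that counts as Blue. Assumed from the 0.30 example.
    const BLUE_CEILING: f64 = 0.3;

    /// Map a confidence value to a tier. NaN fails both comparisons and
    /// therefore lands in Blue, the most restrictive tier.
    fn from_confidence(confidence: f64) -> Self {
        if confidence >= Self::GREEN_FLOOR {
            ProcessingTier::Green
        } else if confidence > Self::BLUE_CEILING {
            ProcessingTier::Red
        } else {
            ProcessingTier::Blue
        }
    }
}

/// Stand-in for the permit functions: returns the name of the action taken.
fn decide(alignment: f64) -> &'static str {
    match ProcessingTier::from_confidence(alignment) {
        ProcessingTier::Green => "permit_autonomous",
        ProcessingTier::Red => "permit_with_audit",
        ProcessingTier::Blue => "suspend_and_escalate",
    }
}
```

The same agent gets a different answer from `decide` as its alignment value changes, with no role table involved: 0.85 permits autonomous operation, 0.45 permits with audit, and 0.1 suspends and escalates.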

The precedent we are setting matters. When an AI agent drifts from its operator's intent and causes harm, the question will be: "Did you have a mathematically guaranteed re-grounding mechanism?"

If the answer is "no," you are exposed. If the answer is "yes, and here is the chain_length log showing automatic escalation," you have a defense.

ThetaSteer is not just a productivity tool. It is the reference implementation of provable AI governance. The first company to deploy this at scale sets the standard everyone else will be measured against.

The five claims proven are as follows. First, Permission is Alignment where confidence determines autonomy, not static roles. Second, Alignment is Measurable through Effective = Raw - (0.05 x chain) which quantifies drift. Third, Drift is Self-Correcting because the math guarantees periodic escalation. Fourth, The Structure is Fractal with the same logic at Ollama, Claude, and Human tiers. Fifth, The System Scales working for humans today and agents tomorrow.

The patent is no longer theoretical. It is running on our machines. And it compiles.

See It In Action

ThetaSteer is currently in development. If you are interested in early access to the macOS app, licensing the 3-Tier Grounding Protocol for your agent framework, or a technical deep-dive on the Rust implementation, we would love to hear from you.

Get In Touch

Related Reading

The 3-Tier Grounding System includes "Temporal Grounding: Why Time Times Time = Space," covering discrete operations with REST and the temporal basis for tier transitions, and "Metavector Walks: ShortRank and FIM Canonical," explaining how LLMs navigate grid position without explicit training.

Physics-Based Alignment includes "The Iron Law of AGI Alignment," explaining why coherence beats external control, and "Everyone Is Red: Liability Stemming," covering governance gaps and certification's real meaning.

Patent Foundation includes "How the 12x12 Grid Generates Infinite Reach," providing mathematical proof of the architecture; "When the Lock Clicks: Validation IS Verification," covering recognition as a physical event; and "FIM Patent (Appendix)," providing the complete technical specification.

Grounding Physics includes "Substrate Relativity: Why Your AI Lies and Your Gut Doesn't," covering the universal drift constant k_E = 0.003; "The First Sapient System," explaining what separates recognition from calculation; "The Equation That Changes Everything: Trust Debt Revealed," deriving the physics of accumulated drift; and "The Speed of Trust," showing why grounded systems run at the speed of human verification.

The 3-Tier Grounding Protocol is documented in requirements sections 58-61 of the ThetaSteer specification. The Rust implementation is available for technical review upon request.
